In recent years, there has been a lot of attention on hypothesis testing and so-called “p-hacking”, or misusing statistical methods to obtain more “significant” results. Rightly so: For example, we spend millions of dollars on medical research, and we don’t want to waste our time and money, pursuing false leads caused by flaky statistics. But even if all of our assumptions are met and our data collection is flawless, it’s not always easy to get the statistics right; there are still quite a few subtleties that we need to be aware of.

This post introduces some of the interesting phenomena that can occur when we are dealing with testing hypotheses. First, we consider an example of a single hypothesis test which gives great insight into the difference between significance and “being correct”. Next, we look at global testing, where we have many different hypotheses and we want to test whether all null hypotheses are true using a single test. We discuss two different tests, Fisher’s combination test and Bonferroni’s method, which lead to rather different results. We save the best till last, when we discuss what to do if we have many hypotheses and want to test each individually. We introduce the concepts of familywise error rate and false discovery rate, and explain the Benjamini-Hochberg procedure.

Also, this post is accompanied by an IPython notebook that demonstrates how these methods work in practice. We analyze free throw percentage data from the NBA to see whether there are players that perform better, or worse, playing at home versus away.

For this post, I assume you have some basic knowledge about hypothesis testing, such as

- The difference between the null hypothesis and alternative hypothesis

- Significance levels

- Type I and Type II errors

- p-values

If you want to read up on the above, the material is covered by any introductory statistics book, as well as many blog posts, e.g. [MT1] and [MT2]. On the other hand, no prior knowledge about multiple testing is necessary.

Also, we ignore all of the intricacies that come with satisfying assumptions of tests. In practice, this is often quite challenging, but our focus is on multiple testing and therefore we ignore these and pretend that we live in a perfect world.

All the material is based on the lectures and lecture notes of Stats 300C by Prof. Candes at Stanford.

Simple example: Difference between p-value and being “right”

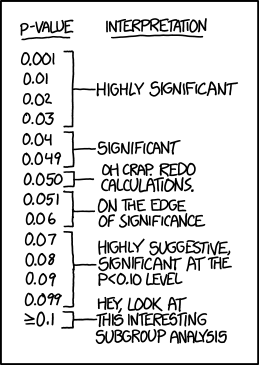

(*p-values* from xkcd)

(*p-values* from xkcd)

Much has been written about what a p-value is, and what it is not, but it seems like many people still make mistakes. Sometimes, a simple example can make all the difference and for me the following example was really enlightening.

The setup is simple, we have a single, generic, two-sided hypothesis test. For example, we could be testing that the mean of some distribution we sample from is \(0\).

We assume that our test statistic, denoted by \(Z\) follows a standard normal distribution under the null hypothesis:

\[Z \sim_{H_0} \mathcal{N}(0, 1)\]As good citizens, we gather our data after specifying our hypothesis and calculate the value of our test statistic \(Z\) and find \(Z = -2.0\) If you have ever taken a class about hypothesis testing, I’m afraid you know the critical value for such a two-sided test by heart: \(1.96\) telling you to reject the null hypothesis at the 5% significance level.

We can also compute a p-value, using the normal CDF: \(p = 2 \Phi(-2.0) = 0.0455\) where we multiply by two because we are conducting a two-sided test, and indeed, it’s slightly below \(0.05\)

So we reject the null hypothesis under a significance level of 5%. Does this tell us the null hypothesis is false? No it does not. The p-value equals the probability of seeing this test statistic, or something more extreme, if the null hypothesis is true. Hence, this value \(Z=-2.0\) was pretty unlikely to be observed if the null hypothesis is true.

From a frequentist perspective we are now done, only the data is generated randomly, the null hypothesis is either true or false, so we can’t make any more probabilistic statements. Still, we would like to know what the probability of a false rejection is, given this test statistic. If we put on our Bayesian hats and add some more assumptions, we can do just that.

First of all, we have to specify a prior on the probability that the null hypothesis is true. For our example let’s be generous and say that a priori we believe our null hypothesis has only a 50% probability of being true: \(P(H_0) = 0.5\)

Furthermore, we also have to specify the distribution of our test statistic under the alternative hypothesis. We often have little idea what this could be, so let’s say \(Z\) is uniformly distributed between \(10\) and \(10\)

\[Z \sim_{H_1} U[-10, 10]\]Summarizing, we have: \(\begin{aligned} P(H_0) &= 0.5\\\\ Z &\sim_{H_0} \mathcal{N}(0, 1)\\\\ Z &\sim_{H_1} U[-10, 10] \end{aligned}\)

And we are interested in computing \(P(H_0 \mid Z = z)\) for \(z = -2.0\)

So before we even start, the prior probability that the null hypothesis is false equals 50%, and we observe a rather small p-value. Surely, we can be fairly certain about our rejection?

Let’s find out. Using Bayes’ Theorem, and abusing notation, we obtain \(P(H_0 \mid Z = z) = \frac{P(H_0)P(Z = z \mid H_0)}{P(Z = z)}\)

Plugging in the priors, we find that we reject correctly only 50% of the time!

Even in the simplest of examples, hypothesis testing can be subtle, and here we clearly demonstrate the big difference between significance and “correctness”.

How is this possible? In the above example, observing \(Z=-2\) under the null is quite unlikely, but it’s also unlikely under the alternative hypothesis. Note that we played this completely by the book, we didn’t do anything “illegal” to make our p-value significant.

Also, note we skipped any details about what hypothesis, what data and what sample size we are dealing with, because for the purposes of this example it’s irrelevant.

A final remark before we move on: One could think that we might be able to resolve this problem by obtaining a larger data set. However, as opposed to estimation, where we expect our estimation error to shrink with increased sample size, this is not the case with the Type I error in testing procedures. All the extra information we get from an increased sample size are used to improve power: increasing the probability of correctly rejecting the null hypothesis. reducing the probability of not rejecting the null hypothesis when we actually should.

Global testing

Hypothesis testing gets even more interesting when there are multiple hypotheses that we want to test. Now, we can “borrow” information from the other test statistics to gain power, as we will soon see.

There are two types of tests:

- Global hypothesis testing: we want to simultaneously test all null hypotheses,

- Multiple testing: for every hypothesis, we want to separately test each null hypothesis.

While the latter might be more relevant in practice, the former leads to great insight and many methods used for the multiple testing problem can be related back to global hypothesis tests, so let’s look at some interesting results for the global test first.

We assume we have \(n\) null hypotheses, \(H_{0, i}\) with corresponding p-values \(p_i\) obtained as if we were only testing hypothesis \(i\) The global null hypothesis then is that all null hypotheses are true: \(H_0 = \bigcap_{i=1}^n H_{0, i}\)

There are two simple tests that, surprisingly, behave quite differently.

Fisher’s combination test

Fisher’s combination test is derived using the following insight. If \(p_i\) is uniformly distributed over \(0,1]\) then the negative logarithm follows an exponential distribution: \(-\log p_i \sim \text{Exp}(1)\)

One can show that the test statistic \(T = - 2 \sum_{i=1}^n \log p_i\) follows a \(\chi^2\) distribution with \(2n\) degrees of freedom if the \(p_i\)s are independent: \(T = - 2 \sum_{i=1}^n \log p_i \sim \chi^2_{2n}.\)

Fisher’s combination test uses the above

- Compute \(T = - 2 \sum \log p_i\) based on the individual p-values, noting that under the null hypothesis, a p-value has a uniform distribution on \([0, 1]\)

- Under the global null hypothesis, \(T\) follows a \(\chi^2_{2n}\) distribution, so we can decide to reject based on the value of \(T\)

Bonferroni’s method

Bonferroni’s method takes a completely different approach: We only look at the smallest p-value, and if this is less than \(\alpha / n\) we reject the global null hypothesis:

Reject \(H_0\) if \(\min_i p_i \le \alpha / n\)

Using a union bound, we can show that the type I error rate is less than \(\alpha\) Assuming the global hypothesis is true, we have: \(\begin{aligned} P_{H_0}(\text{Type I error}) &= P_{H_0}\left(\bigcup_{i=1}^n \{p_i < \alpha / n\}\right)\\\\ &\le \sum_{i=1}^n P_{H_0}(p_i < \alpha / n) \\\\ &= n \frac{\alpha}{n} = \alpha \end{aligned}\)

Differences

Both tests are straightforward, so which one should you use? Well, as it turns out: it depends. In certain situations, Fisher’s combination test has near full power (that is, it rejects with very high probability when the global null hypothesis is false), while Bonferroni is powerless (the probability of correctly rejecting vanishes), while in other situations, the opposite happens.

This surprised me at first, but if we take a closer look, the reason becomes quite clear. Bonferroni only considers the smallest p-value, which means it is very good at detecting when there are few large effects: the smallest p-value will most likely be from an alternative hypothesis. In fact, it can be shown that Bonferroni’s method is optimal for detecting one large effect.

On the other hand, Fisher’s combination test is not as effective in this scenario: the effect of the alternative hypotheses will be wiped out by the noise of the large number of true null hypotheses. Hence, the test won’t be able to reject the global null hypothesis in this case.

But, if there are many small effects, Fisher’s combination test really shines: all these little deviations add up and this test is able to reject the global null. While no single hypothesis provides enough evidence to reject the null hypothesis that all hypotheses are true, all the little discrepancies combined cause us to reject the null.

However, Bonferroni’s method is useless in the latter case though: because it only uses one p-value, it is unable to get enough evidence to reject the global null hypothesis. In fact, it could be very likely that the smallest p-value actually comes from a test where the null hypothesis is true!

Multiple testing

(Significant from xkcd)

Rejecting the global null hypothesis is great, but anyone’s first response to such rejection would be: so tell me, which null hypothesis is false?

For this, we need to open another bag of tricks. We still have \(n\) hypotheses, but now we want to reject hypotheses at the individual level.

Could we simply test each using the same procedure as when we are testing a single hypothesis? Suppose we are testing \(n = 1000\) hypotheses and we test at the 5% significance level. Then, we expect about 50 false positives by mere chance. That’s not very appealing. So if It turns out that our test rejects 100 hypotheses, you still can’t confidently say anything about any of the rejected hypotheses. We could of course decrease \(\alpha\) but this leads to the next problem.

Naturally, we are primarily interested in the smallest p-values. However, the interpretation of this smallest p-value as “the probability of observing a more extreme value given the null hypothesis being true” is now inaccurate. Rejecting such hypothesis at the \(\alpha\) significance level leads to a much larger type I error rate. We should take into account that this is the smallest out of \(n\) p-values, and hence this smallest p-value is not uniform on \(0, 1]\) at all, but is “skewed to the left”. Seeing something “extreme” in 100 observations much more likely than in a single observation. This leaves us clueless about the actual type I error rate.

We can look at this in another way; it is true that for every single hypothesis test, we use a procedure that leads to the probability of a type I error equal to \(\alpha\) This is a guarantee that we have before applying the method; after applying the method, there is no randomness left according to the frequentist perspective. However, when we consider the smallest p-value, we change the procedure to take additional information into account (we combine all \(n\) hypotheses into a single one, in some sense). We changed the procedure we are using, and old guarantees don’t carry over.

Familywise error rate

So, we need a different approach, which starts by defining exactly what we want to control. This is not a new problem at all, and for decades statisticians have used the Familywise Error Rate (FWER), which ensures that the probability of committing a single false rejection is bounded by \(\alpha\)

To achieve this, we can use Bonferroni’s method again: we reject null hypothesis \(H_0, i\) if \(p_i \le \alpha / n\) To show that indeed this controls the familywise error rate, we can use the exact same union bound as shown above.

However, when there are lots of hypotheses to test, this is very conservative, and leads to very few rejections, or low power: When we test hundreds or thousands of hypotheses, do we really care about making a few mistakes as long as most of our rejections are correct?

There are a few methods, such as Holm’s procedure, that are a bit more powerful, but the FWER criterion is too restrictive even using slightly better methods.

False discovery rate

So we want a different criterion, one that is less restrictive than the familywise error rate, but so that we still have tight control over the number of false rejections. When testing many hypotheses, we might be fine allowing a few false rejections, or false discoveries, as long as the majority of rejections are correct.

To make this more rigorous, let \(R\) be the total number of rejections, and \(V\) be the number of false rejections. Then we would like to make sure the fraction \(V / R\) known as the False Discovery Proportion (Fdp), is small. There is one problem with this approach though: we know \(R\) but \(V\) is unknown, so we cannot use this quantity directly.

Instead, in a seminal paper, Benjamini and Hochberg [BHQ] propose a procedure that is known as \(BH(q)\) (or Benjamini-Hochberg procedure) that ensures that in expectation, the above ratio is controlled: \(\frac{E[V]}{\max(R, 1)} < q,\) which is known as the False Discovery Rate (FDR).

The procedure works as follows:

- Sort the p-values from small to large, such that \(p_{(1)} \le p_{(2)} \le \ldots \le p_{(n)}\)

- Find the largest (sorted) p-value \(p_{(i)}\) such that \(p_{(i)} \le q \frac{i}{n}\)

- Reject only hypotheses \(1, \ldots, i\)

It is important to note that this does not always work. However, if the hypotheses are independent, then the above method controls FDR. Also, if all the null hypotheses are true, then FWER and FDR are equivalent, and because FWER is conservative, any procedure that controls the FWER also controls FDR.

The visualization demonstrates the procedure graphically. Here, we generate a set of p-values, the ones with a green stroke come from a true null hypothesis, while the ones with a red stroke are not uniformly distributed. The fill of the circles show show whether that hypothesis is rejected. While in practice most use a value of \(q=0.1\) we use \(0.2\) instead for demonstration purposes. We also report the false discovery proportion, and the “(empirical) power”, the fraction of alternative hypotheses that are correctly rejected.

While gaining popularity in scientific fields to deal with multiple testing, the FDR metric is not without its flaws either. Because we cannot divide by zero, each time we do not reject any hypothesis, this counts as an Fdp of \(0\). Therefore, as long as a method often does not reject any hypotheses at all, then this method is free to reject whatever it wants for the remaining fraction of tests and still control FDR. Consider the following two methods for testing multiple hypotheses:

Method 1: Throw a biased coin that comes up head with probability \(q\) If the coin comes up tails, don’t reject any of the hypotheses. On the other hand, if the comes up heads, reject all hypotheses.

Method 2: Throw a biased coin that comes up head with probability \(q\) If the coin comes up tails, don’t reject any of the hypotheses. If the coin comes up heads, select 1 random hypothesis and reject it.

Though both methods are completely useless, they both control FDR at level \(q\). I would argue, though, that method 2 is better than method 1. It’s better to make a single mistake than \(n\) mistakes. In general, this could lead to a false sense of “significance”. When we see a lot of rejections, we might be tempted to think: since there are so many rejections, this cannot be a coincidence, I’ve struck gold with my dataset. But recall, we can never say anything about a specific outcome.

Three comments:

- Of course the same criticism holds for FWER as well, so going back to controlling FWER does not solve this problem.

- There has been research into metrics related to FDR that do not have this problem, but controlling such metrics is more difficult [STO].

- It has been shown that if hypotheses are independent, then the Fdp is very close to the FDR when applying the BH(q) procedure.

Bayesian approach to FDR

While we don’t have the space to delve into it, it is important to note that there is also a beautiful Bayesian approach that also leads to the FDR criterion and the BHq procedure. For the interested, please refer to Lecture notes from Stats 300C or [LSI].

DIY

While many of these algorithms are easy to implement, both Python and R can do the hard work for you.

Multiple testing in Python

For python, statsmodels is able to help out.

For example, to use the \(BH(q)\) procedure, we can

import random

from statsmodels.sandbox.stats.multicomp import multipletests

# as example, all null hypotheses are true

pvals = [random.random() for _ in range(10)]

is_reject, corrected_pvals, _, _ = multipletests(pvals, alpha=0.1, method='fdr_bh')

See the documentation for more information.

Multiple testing in R

In R it is equally simple to adjust p-values for multiple comparisons. Again, focusing on \(BH(q)\) we can

pvals <- runif(10, 0, 1)

corrected_pvals <- p.adjust(pvals, method = 'BH')

The documentation has the details and a list of other available methods.

Final remarks

Hypothesis testing is a subtle and surprisingly beautiful subject. On the one hand, testing is more prevalent than ever, while on the other hand there is a big backlash against the use of p-values, especially in academia. On top of that, we rarely have access to data that has not been analyzed by others before. By gaining a better understanding of hypothesis tests and the multiple comparisons problem in particular, I feel like I have gained a powerful tool, even though I don’t use it every day. The real benefit is the awareness of the subtle dangers when dealing with statistics, where it is important to be skeptic. So next time you see a single significant p-value from a linear or logistic regression model, stop for a second and think about how significant “significant” really is.

Also, don’t forget about the IPython notebook that looks at these methods using data from the NBA if you are interested.

References

This post is based on material of terrific course Stats 300C by Prof. Candes at Stanford. In particular, the lecture notes 1, 2, 3, 6, 8, 9, and 12. Definitely have a look at them if you are interested in learning more.

- [BHQ] Y. Benjamini and Y. Hochberg - Controlling the false discovery rate: A practical and powerful approach to multiple testing (1995)

- [LSI] B. Efron - Large-Scale Inference: Empirical Bayes Methods for Estimation, Testing, and Prediction (2011)

- [MT1] Understanding Hypothesis Tests: Significance Levels (Alpha) and P values in Statistics

- [MT2] How to Correctly Interpret P Values

- [STO] J. Storey - The Positive False Discovery Rate: A Bayesian interpretation and the q-value (2003)